The first biweekly issue (AI, Law, and Otter Things #18)

Hello, dear reader, and welcome to a brand new issue of AI, Law, and Otter Things. Today's newsletter is relatively light in reading recommendations, but for good reasons. Instead, this newsletter brings you a rant on these reasons, some reflections about a piece of Dune backstory from the perspective of AI regulation, and links to open calls for papers and forthcoming events.

(Attention conservation notice: this issue ended up being a bit longer than the previous ones, but its parts are mostly independent of one another.)

Those kilometers and the red lights

Over the past two weeks, most of what I read was directed towards two goals. These readings turned out to be very stimulating, but they do not leave me with much room to recommend things that might be of general interest here. Still, I would welcome any suggestions for further reading on the topics below!

The first driver for my recent readings is the need to address some gaps in my knowledge of European Union law. While I had read some introductory materials on EU law, most of my engagement with the subject was centred on data protection law. As the AI Act proposed by the European Commission occupies a sizeable chunk of my research plans, I have decided to seize the opportunity and familiarise myself with some specialised topics that are relevant to my approach. In addition to the future-proofing and impact assessment topics I have mentioned before, my recent readings have taken me into the New Legislative Framework and the role of values in the EU legal order, among other topics. As soon as I develop my ideas a bit more, I might be able to make some informed recommendations, but in the meantime, I would appreciate any pointers on that.

(And a recommendation of a book-length treatment of EU history would also interest me greatly.)

The second, and most immediate, factor driving my recent reading is that I have finally managed to get some serious-ish writing done. I have not been content with my writing outputs for a few months now: everything felt like an inchoate treatment of a subject or a repetition of something that I had already said elsewhere. The former scenario is annoying, especially when one has an over-inflated opinion of one's writing skills. Still, it is inevitable, I think: the first step towards a good text is writing a lousy text and all that. So, despite my complaints over the past weeks, now I have some very rough drafts that I can develop in the future.

Repetition, on the other hand, troubles me a lot. As I mentioned in the last issue, I do not see myself as the kind of scholar who spends their career writing the same paper. This focused approach can be incredibly valuable if done right, as it sharpens definitions and refines existing knowledge. But I am not a particularly good hedgehog, and not just because of my lack of sympathy for Dworkin's approach to justice. And I felt that acutely in my recent writing, as I was not even happy with the outputs. If one is going to write the same thing again and again, one should at least do a better job after each iteration.

(Since I have spoken of repetition, I feel obliged to mention King Crimson's Indiscipline. I should have had this idea two weeks ago and added it as one of the vignettes. Instead, I will just leave you with a link to one of my favourite versions of this song.)

This week, however, was a bit different. After some conversations at the EUI, I felt inclined to revisit my previous approach to human intervention in automated decision-making, as Article 14 of the AI Act brings some interesting novelties on that front. As I sat down to do so, I finally felt like I had something new to say about the subject, leading to a draft I was not entirely dissatisfied with. That is not to say that the text is good already, and I should actually get the opinions of some colleagues on the matter. But at least the text itself does not read like a remix of the stuff sitting in my "Drafts" folder. And so, a large chunk of my reading this week ended up dedicated to checking references for the draft, leaving little space for things that I can disclose at this point.

Lessons from the Butlerian Jihad

Despite the vital role Dune played in my intellectual formation, I was not really sure I would watch the new movie. For starters, I am not really an enthusiast of the seventh art, and my previous experiences with Denis Villeneuve's science fiction movies were not very positive. To further complicate things, my Green Pass troubles meant that I missed a good chunk of the movie's theatrical run in Italy.

At the end of the day, my fanboy side took control, and I bought the movie on a streaming service. I have to admit it was a good adaptation, though it might have been confusing to somebody who was having their first contact with the story. This is why I was happy that Villeneuve decided to do a two-part adaptation rather than fit the book into a single film (and even more so with the rumours of a Dune Messiah adaptation).

Even with two (or three) films, the cinematic version had to cut a lot of Dune's background narratives. In most cases, this was a wise decision (but, if you wonder why people use swords in the far future, check this). But it meant diluting or even erasing many of the book's underlying themes, such as the role of Islam and religion in general, the ecological transformation of Arrakis, and the topic I want to explore here: the Butlerian Jihad.

One of the striking features of the Dune universe is that there are no computers whatsoever. From a meta-narrative point of view, this follows from Frank Herbert's interest in centring his narratives on human characters instead of the robots and intelligent computers that are so common in science fiction. To justify this choice within the narrative universe, Herbert posited the aforementioned jihad: an event in which humans rebelled against AI oppression and decided to ban any form of "thinking machines".

Consequently, many of the tasks other sci-fi universes delegate to machines are performed by living beings. Spaceship navigation is done by (not quite) human navigators from the Spacing Guild, who rely on the prescience granted by the spice melange to safely guide ships on faster-than-light travel. Administrative and tactical tasks are performed by mentats trained to act as human calculators and memory repositories. These and other human professions meant to replace AI systems require extensive training, monopolised by diverse political actors, thus reinforcing the feudal social structures that characterise the Dune universe.

As I argued in a previous issue, sci-fi can provide us with valuable frames for the contemporary challenges technology raises. But what can we learn about AI regulation by looking at an AI-free universe, especially one written nearly 60 years ago? The first thing we gain from this is a reminder that our concerns about risk from AI are nothing new. In the appendices of the original 1965 book, the Butlerian Jihad was cast as a rebellion against the "God of machine-logic" that culminated in the principle that "Man may not be replaced". Frank Herbert was by no means the first to make such critiques, and indeed the name "Butlerian Jihad" is a throwback to Samuel Butler's Erewhon. Still, throughout the books, it is not hard to find condemnations of AI that are very close in reasoning (if not language) to contemporary critique.

The absence of AI in Dune also provides an extreme example of regulatory effects in a fictional universe. As a good science fiction writer, Herbert was well aware that a universe without artificial intelligence technologies would not develop in the same way as other books envisioned. But, while some commenters see the book's feudal society as an implausible choice motivated by aesthetic factors, I would argue (as I did elsewhere) that it is, in fact, a thought experiment on the relationship between technological change and the centralisation of power. Even though a Butlerian Jihad seems unlikely in the real world, Herbert's efforts in understanding the long-term effects of norms on technology should remind us, at the very least, of the importance of broadening our imaginaries when contemplating the potential consequences of regulatory choices.

Since I have already rambled for too long on this topic, I will conclude by pointing out that the Butlerian Jihad also provides a good illustration of the relationship between AI technologies and power. Within the Dune universe, the Jihad is presented as liberating humans from AI-mediated domination. In the 1965 book, Reverend Mother Gaius Helen Mohiam (better known for applying the Gom Jabbar to Paul Atreides, the protagonist) states that "Once men turned their thinking over to machines in the hope that this would set them free. But that only permitted other men with machines to enslave them". Yet, banning AI led to the development of human flourishing technologies, which, in turn, enabled new forms of domination. This does not mean AI regulation is pointless, especially if we remember that the skills displayed in the book by humans are biologically impossible in real life. Instead, it highlights that power is a fluid thing: old actors might find new ways to reassert their authority, while prohibitions might open possibilities for new actors to seize power. This is a point that technology regulators need to keep in mind in their practice.

None of these lessons, of course, are exclusive to fiction, and an attentive reader could probably point to various scholars and activists making the same points in real-world contexts. Nevertheless, pointing out these issues in a fictional universe might be helpful to make us look at things from a different angle. And it would be unwise to overlook the emotional impact that such narratives can have, especially when it comes to people who have not felt the worst aspects of automation in their lives. So, by all means, one should look beyond science fiction in dealing with problems in the real world. But stories might be an excellent place to start your journey.

Events and Calls for Papers

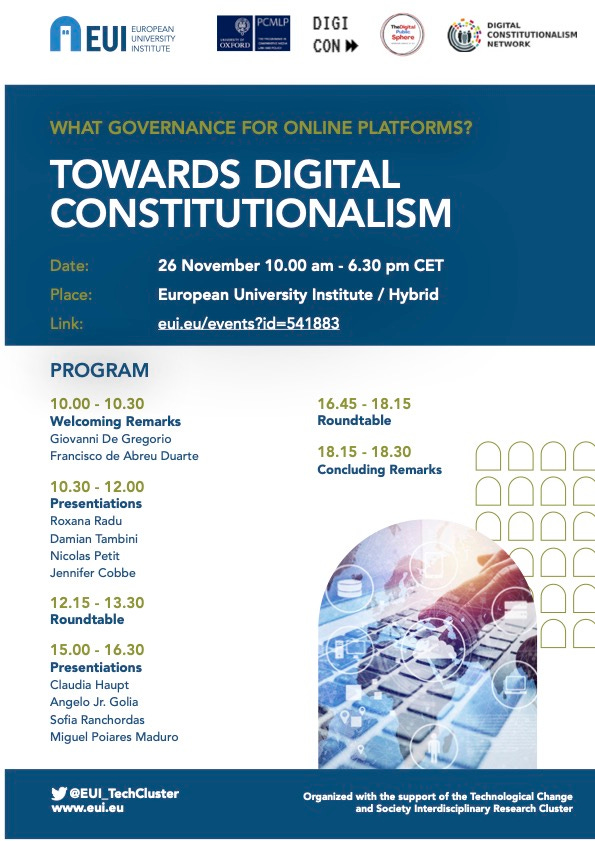

A few issues ago, I shared the Call for Papers for a conference on Digital Constitutionalism. The event will happen on November 26, from 10.00 to 18.30 CET (UTC+1), and Giovanni de Gregorio has tweeted the programme and link for registration.

There are at least two interesting workshops with deadlines on 19 November 2021. The YRLC at UHasselt has an open call for abstracts on Moving forward: Building societal resilience through law. They are calling for 400-word abstracts, and the conference itself will take place on 17 December as a hybrid event. The same deadline applies to this interdisciplinary workshop being organised by the University of Tübingen on AI and Democracy, which asks for a short abstract (max. 200 words) connecting your research with the workshop topic. The event itself will take place from 29 to 31 January 2022.

The TMC Asser Institute has a call for papers on the Law and ethics of AI in the public sector. Submissions of abstracts up to 300 words are due by 3 December, and full papers of 3000-8000 words are expected by 25 February.

For a longer time horizon, FAccT 2022 has extended its call for papers: full papers are due on 14 January 2022, but you have to submit an abstract by 15 December 2021. Papers are expected to be of publication-ready quality (Computer Science is traditionally a conference-oriented discipline), but they welcome contributions from a broad range of disciplines dealing with themes of fairness, accountability, and transparency.

Finally, the Florence School of Regulation has opened a call for papers for its 11th Annual Conference. The next edition's theme is "From Data Spaces to Data Governance", and they are accepting abstracts until 31 January 2022.

Closing words

Since many of you are Twitter users, I strongly recommend you follow the official profile of the Technological Change and Society interdisciplinary cluster. Its handle is @EUITechCluster, and there we share information about the cluster's events, news items about our topics of interest, and relevant research.

And now, to finish this issue, I leave you with the mandatory otter picture. See you around!